Processing pipelines in spaCy

Let’s look at the pipeline functions provided by spaCy. This article continues from the previous one, Large scale data analysis with spaCy.

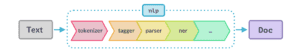

What happens when we call nlp?

doc = nlp("Hello world!")

First, the text is transformed into a Doc object by the tokenizer. Then a series of pipeline components is applied to the Doc in order to process it and set attributes. Finally, the processed Doc is returned.
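The three steps above can be pictured with a small plain-Python sketch. It is only an illustration of the control flow, not spaCy's implementation: `ToyDoc`, `toy_tokenizer`, `count_tokens`, `flag_exclamation` and `run_pipeline` are hypothetical names invented for this example, and the whitespace split is far cruder than spaCy's tokenizer.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class ToyDoc:
    """Hypothetical stand-in for a Doc: tokens plus attributes set by components."""
    tokens: List[str]
    attrs: Dict[str, object] = field(default_factory=dict)


def toy_tokenizer(text: str) -> ToyDoc:
    # Step 1: turn the raw text into a document object (naive whitespace split).
    return ToyDoc(tokens=text.split())


def count_tokens(doc: ToyDoc) -> ToyDoc:
    # A component sets attributes on the doc and returns it.
    doc.attrs["n_tokens"] = len(doc.tokens)
    return doc


def flag_exclamation(doc: ToyDoc) -> ToyDoc:
    doc.attrs["has_exclamation"] = any(t.endswith("!") for t in doc.tokens)
    return doc


def run_pipeline(text: str, pipeline: List[Tuple[str, Callable[[ToyDoc], ToyDoc]]]) -> ToyDoc:
    doc = toy_tokenizer(text)
    # Step 2: apply each component in order; each one receives the previous result.
    for _name, component in pipeline:
        doc = component(doc)
    # Step 3: return the processed doc.
    return doc


pipeline = [("count_tokens", count_tokens), ("flag_exclamation", flag_exclamation)]
doc = run_pipeline("Hello world!", pipeline)
print(doc.tokens)
print(doc.attrs)
```

The key point is that every component has the same shape (doc in, doc out), which is what lets components be chained in any order.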

Built-in pipeline components

A pipeline component is a function or callable that takes a doc, modifies it and returns it, so that it can be processed by the next component in the pipeline.

| Name | Description | Creates |
|---|---|---|
| tagger | Part-of-speech tagger | Token.tag |
| parser | Dependency parser | Token.dep, Token.head, Doc.sents, Doc.noun_chunks |
| ner | Named entity recognizer | Doc.ents, Token.ent_iob, Token.ent_type |
| textcat | Text classifier | Doc.cats |

NOTE: The text classifier is not included in any of the pre-trained models by default as categories are very specific.

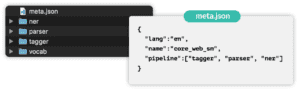

Under the hood

- Pipelines are defined, in order, in the model’s meta.json.
- Built-in components need binary data to make predictions. The data is included in the model package and loaded into the component when we load the model.

Pipeline attributes

- nlp.pipe_names: list of pipeline component names
- nlp.pipeline: list of (name, component) tuples

import spacy

nlp = spacy.load("en_core_web_md")

print(nlp.pipe_names)

print(nlp.pipeline)

OUTPUT

['tagger', 'parser', 'ner']

[('tagger', <spacy.pipeline.pipes.Tagger object at 0x7fd284a8bda0>), ('parser', <spacy.pipeline.pipes.DependencyParser object at 0x7fd23a74aca8>), ('ner', <spacy.pipeline.pipes.EntityRecognizer object at 0x7fd23a74ad08>)]

Custom pipeline components

We can add custom functions to the pipeline, which then get executed when we call the nlp object on a text.

Custom functions can be used to add our own metadata to documents and tokens.

They can also be used to update built-in attributes such as doc.ents.

To add a component to the pipeline, use the nlp.add_pipe method. It takes the component to add as the first positional argument. It also accepts the following optional keyword arguments, which determine the position of the component in the pipeline:

| Argument | Description | Example |
|---|---|---|
| last | If True, add last | nlp.add_pipe(component, last=True) |
| first | If True, add first | nlp.add_pipe(component, first=True) |
| before | Add before the named component | nlp.add_pipe(component, before="ner") |
| after | Add after the named component | nlp.add_pipe(component, after="tagger") |

Example:

import spacy

# Create the nlp object

nlp = spacy.load("en_core_web_sm")

# Define a custom component

def custom_component(doc):

    # Print the doc's length

    print('Doc length:', len(doc))

    # Return the doc object

    return doc

# Add the component first in the pipeline

nlp.add_pipe(custom_component, first=True)

# Print the pipeline component names

print("Pipeline:", nlp.pipe_names)

# Process the text

doc = nlp("Hello world!")

OUTPUT

Pipeline: ['custom_component', 'tagger', 'parser', 'ner']

Doc length: 3
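To make the position arguments more concrete, here is a plain-Python sketch that treats the pipeline as an ordered list of (name, component) tuples and inserts into it. This is an assumption-laden illustration, not how spaCy implements nlp.add_pipe: `add_component` and `identity` are hypothetical helpers, and the sketch skips the validation a real library would do.

```python
def add_component(pipeline, name, component, first=False, before=None, after=None):
    """Insert (name, component) into a list of (name, component) tuples.

    Hypothetical helper: with no position argument the component is added last.
    """
    names = [n for n, _ in pipeline]
    if first:
        index = 0
    elif before is not None:
        index = names.index(before)       # take the slot of the named component
    elif after is not None:
        index = names.index(after) + 1    # slot right behind the named component
    else:
        index = len(pipeline)             # default: append at the end
    pipeline.insert(index, (name, component))
    return pipeline


def identity(doc):
    # Placeholder component that returns the doc unchanged.
    return doc


pipeline = [("tagger", identity), ("parser", identity), ("ner", identity)]
add_component(pipeline, "a", identity, first=True)
add_component(pipeline, "b", identity, before="ner")
add_component(pipeline, "c", identity, after="tagger")
add_component(pipeline, "d", identity)

names = [name for name, _ in pipeline]
print(names)  # ['a', 'tagger', 'c', 'parser', 'b', 'ner', 'd']
```

Because components run in list order, the position decides which attributes are already set when a custom component sees the doc. A component added first, as in the example above, runs before the tagger, parser and entity recognizer.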

Extension attributes

Let’s look at how to add custom attributes (metadata) to the Doc, Token and Span objects.

Custom attributes are available via the ._ property. This distinguishes them from the built-in attributes.

Attributes need to be registered on the Doc, Token or Span classes using the set_extension method.

import spacy

# Import global classes

from spacy.tokens import Doc, Token, Span

# Set extensions on the Doc, Token and Span

Doc.set_extension('title', default=None)

Token.set_extension('is_color', default=False)

Span.set_extension('has_color', default=False)

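# Example objects to set the attributes on (assumes the small English model is installed)

nlp = spacy.load("en_core_web_sm")

doc = nlp("The sky is blue.")

token, span = doc[3], doc[1:4]

# Overwrite the default values on specific objects

doc._.title = 'My document'

token._.is_color = True

span._.has_color = False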

Types of extension attributes:

- Attribute extensions – Set a default value that can be overwritten

Example:

# Set extension on the Token with default value

Token.set_extension('is_color', default=False)

doc = nlp("The sky is blue.")

# Overwrite extension attribute value

doc[3]._.is_color = True

- Property extensions – Work like properties in Python. Define a getter and an optional setter function.

The getter is only called when you retrieve the attribute value.

from spacy.tokens import Token

# Define getter function

def get_is_color(token):

    colors = ["red", "yellow", "blue"]

    return token.text in colors

# Set extension on the Token with getter

Token.set_extension("is_color", getter=get_is_color)

doc = nlp("The sky is blue.")

print(doc[3].text, '-', doc[3]._.is_color) # blue - True
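Since property extensions are described as working like Python properties, a plain-Python analogy may help show when the getter runs. This sketch uses an ordinary `@property` on a hypothetical `ToyToken` class. It does not use spaCy, and the `getter_calls` counter exists only to make the timing visible.

```python
class ToyToken:
    """Hypothetical stand-in for a token; not spaCy's Token class."""

    COLORS = {"red", "yellow", "blue"}

    def __init__(self, text):
        self.text = text
        self.getter_calls = 0  # bookkeeping for the demonstration only

    @property
    def is_color(self):
        # Runs on every attribute access, never at construction time.
        self.getter_calls += 1
        return self.text in self.COLORS


token = ToyToken("blue")
calls_before_access = token.getter_calls  # 0: nothing has been computed yet

first_value = token.is_color              # getter runs now
token.text = "sky"
second_value = token.is_color             # getter runs again and sees the new text

print(calls_before_access, first_value, second_value, token.getter_calls)
```

The value is computed on access rather than stored, so it always reflects the current state of the object.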

Span extensions should almost always use a getter. Otherwise, every possible span would have to be updated by hand to set its value.

from spacy.tokens import Span

# Define getter function

def get_has_color(span):

    colors = ["red", "yellow", "blue"]

    return any(token.text in colors for token in span)

# Set extension on the Span with getter

Span.set_extension('has_color', getter=get_has_color)

doc = nlp("The sky is blue.")

print(doc[1:4]._.has_color, '-', doc[1:4].text) # True - sky is blue

print(doc[0:2]._.has_color, '-', doc[0:2].text) # False - The sky

- Method extensions – Make the extension attribute a callable method.

a. Assign a function that becomes available as an object method

b. Lets us pass arguments to the extension function

from spacy.tokens import Doc

# Define method with arguments

def has_token(doc, token_text):

    in_doc = token_text in [token.text for token in doc]

    return in_doc

# Set extension on the Doc with method

Doc.set_extension('has_token', method=has_token)

doc = nlp("The sky is blue.")

print(doc._.has_token("blue"), '- blue') # True - blue

print(doc._.has_token("cloud"), '- cloud') # False - cloud

Scaling and performance

Processing lots of texts

Let’s look at tips and tricks to make spaCy pipelines run as fast as possible and process large volumes of text efficiently.

nlp.pipe:

a. Processes the texts as a stream and yields Doc objects

b. Much faster than calling nlp on each text, because it batches up the texts

docs = list(nlp.pipe(LOTS_OF_TEXTS))
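The two ideas behind nlp.pipe, streaming and batching, can be sketched in plain Python. This is a simplified illustration under stated assumptions, not spaCy's code: `minibatch`, `toy_process_batch` and `toy_pipe` are hypothetical helpers, and splitting on whitespace stands in for the model work that benefits from being run on a whole batch at once.

```python
from itertools import islice


def minibatch(items, size):
    """Yield lists of up to `size` items from any iterable, lazily."""
    iterator = iter(items)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch


def toy_process_batch(texts):
    # Stand-in for work that is cheaper per item when done on a batch.
    return [text.split() for text in texts]


def toy_pipe(texts, batch_size=2):
    """Consume texts as a stream, process them in batches, yield results one by one."""
    for batch in minibatch(texts, batch_size):
        for result in toy_process_batch(batch):
            yield result


# The input is a generator, so texts are only produced as they are needed.
texts = (f"text number {i}" for i in range(5))
stream = toy_pipe(texts, batch_size=2)

first = next(stream)  # only the first batch has been processed at this point
rest = list(stream)   # drain the remaining results
print(first, len(rest))
```

Because the result is a generator, wrapping it in list(...) as in the snippet above materializes everything in memory. For very large inputs, iterating over the stream directly avoids that.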

Passing in context

Option 1

- Setting as_tuples=True on nlp.pipe lets us pass (text, context) tuples
- Yields (doc, context) tuples
- Useful for associating metadata with the doc

import spacy

nlp = spacy.load("en_core_web_md")

data = [

('This is a text', {'id': 1, 'page_number': 15}),

('Add another text', {'id': 2, 'page_number': 16})

]

for doc, context in nlp.pipe(data, as_tuples=True):

    print(doc.text, context['page_number'])

OUTPUT

This is a text 15

Add another text 16

Option 2

import spacy

from spacy.tokens import Doc

Doc.set_extension("id", default=None)

Doc.set_extension("page_number", default=None)

nlp = spacy.load("en_core_web_md")

data = [

('This is a text', {'id': 1, 'page_number': 15}),

('Add another text', {'id': 2, 'page_number': 16})

]

for doc, context in nlp.pipe(data, as_tuples=True):

    doc._.id = context['id']

    doc._.page_number = context['page_number']

Using only the tokenizer

# Converts text to doc before the pipeline components are called

doc = nlp.make_doc("Hello world!")

Disabling pipeline components temporarily

Pipeline components can be disabled using the nlp.disable_pipes context manager.

Example:

To process a document with only the named entity recognizer, we can temporarily disable the tagger and parser as follows:

# Disable tagger and parser

with nlp.disable_pipes('tagger', 'parser'):

    # Process the text and print the entities

    doc = nlp(text)

    print(doc.ents)

The disabled components are automatically restored after the with block.
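The restore-on-exit behaviour is the standard context manager pattern. The sketch below shows that pattern on a toy pipeline list. It is an illustration only, not spaCy's implementation of nlp.disable_pipes: `disabled` and `identity` are hypothetical helpers.

```python
from contextlib import contextmanager


@contextmanager
def disabled(pipeline, *names):
    """Temporarily remove the named components from a list of (name, component) tuples."""
    original = list(pipeline)  # remember the full pipeline
    pipeline[:] = [(n, c) for n, c in pipeline if n not in names]
    try:
        yield pipeline
    finally:
        # Runs even if the with block raises, so the pipeline is always restored.
        pipeline[:] = original


def identity(doc):
    # Placeholder component that returns the doc unchanged.
    return doc


pipeline = [("tagger", identity), ("parser", identity), ("ner", identity)]

with disabled(pipeline, "tagger", "parser"):
    inside = [name for name, _ in pipeline]

after = [name for name, _ in pipeline]
print(inside)  # ['ner']
print(after)   # ['tagger', 'parser', 'ner']
```

Putting the restore step in a finally clause means the pipeline comes back intact even when processing inside the block fails.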